50 bidders. One afternoon.

A defence pack signed before close of play.

Drop in your tender pack and a folder of bidder submissions. Award IQ scores every response against 0–5 descriptor bands, links every score to verbatim quoted passages from the bidder's own response, and generates four audit-ready reports. The combined Most Advantageous Tender (MAT) winner surfaces the moment prices are entered.

Award IQ provides evaluation support. Final scores, moderation outcomes and award decisions remain with the contracting authority and evaluation panel. Panels review, adjust, and approve all outputs before award.

“Across the Manchester contract our 24/7 reactive team reduced unplanned downtime from 28 hours per month to 6 hours per month, validated by the customer's on-site facilities lead in monthly KPI reviews.”

Quote verified against bidder's submission · supports score band “named NHS contracts in last 3y with KPIs”

Illustrative output — figures and bidder names are anonymised samples.

Built for the buyer side

Twelve capabilities that compress 3–6 weeks of panel time into a single afternoon — without compromising the audit trail.

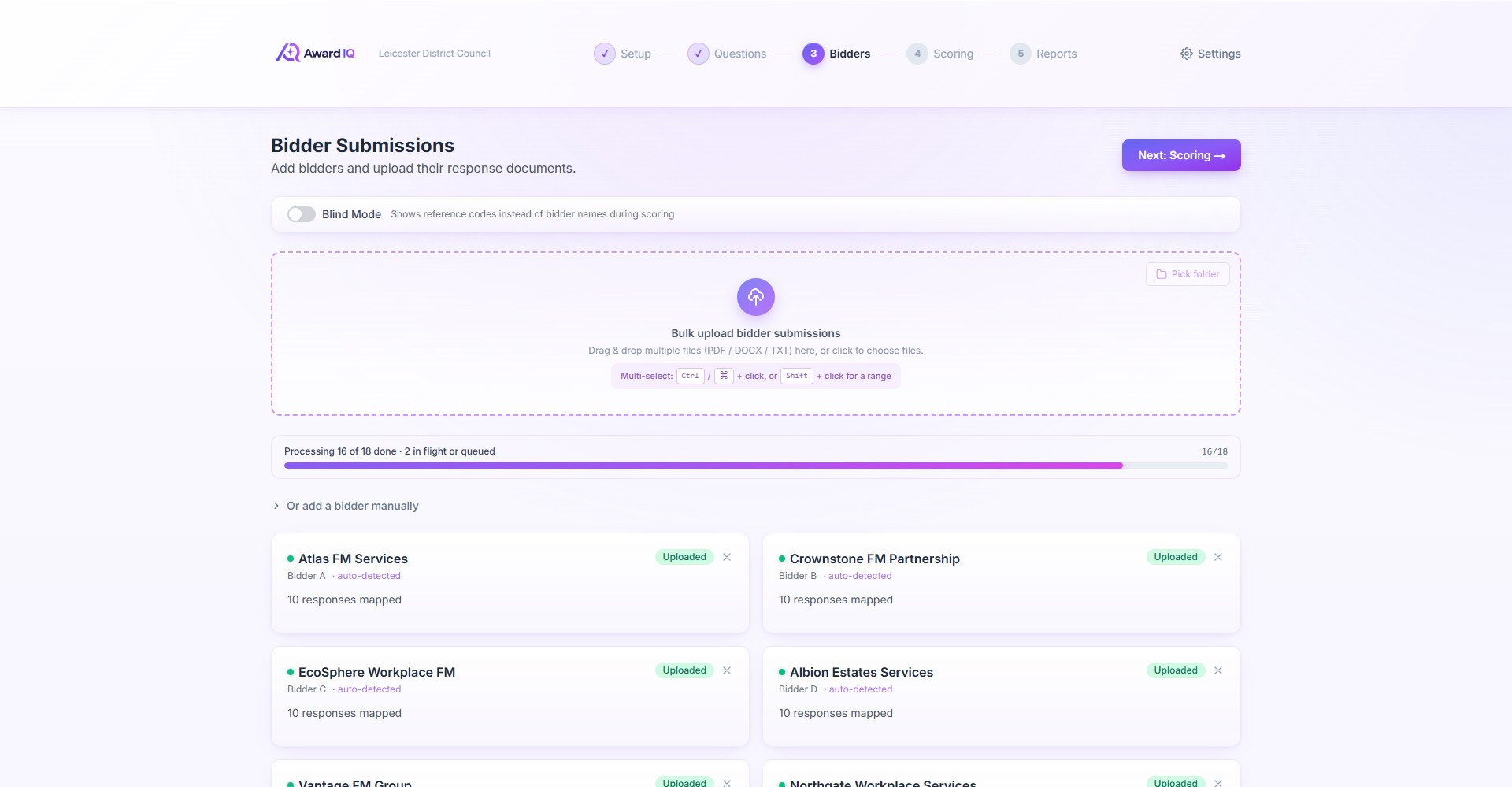

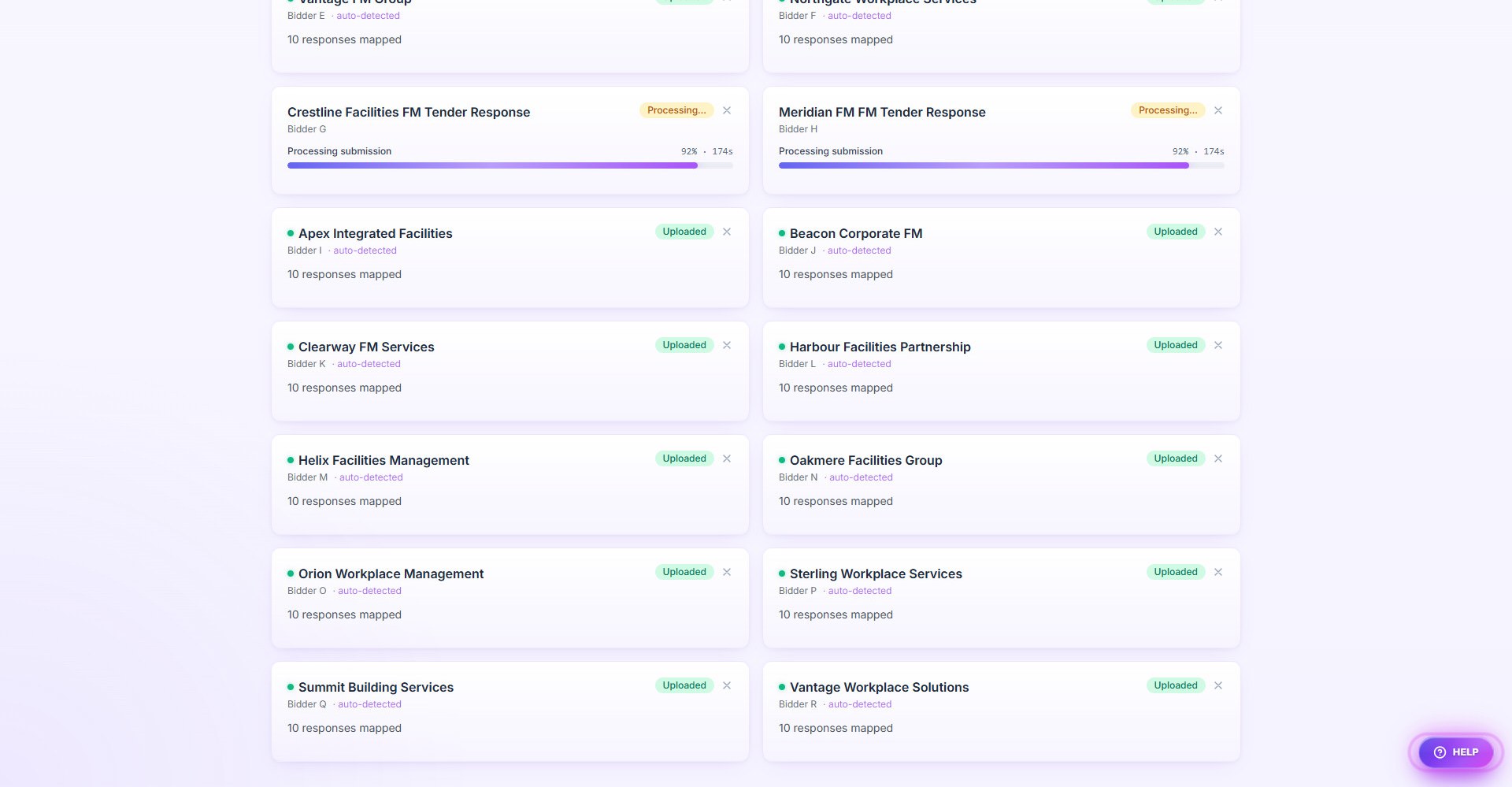

Bulk Bidder Upload

Drop a folder of 50 submissions in one go. Each bidder is ingested in parallel; the company name is auto-detected from the cover page; charts and Gantt charts are described in plain text so the panel sees the visual evidence.

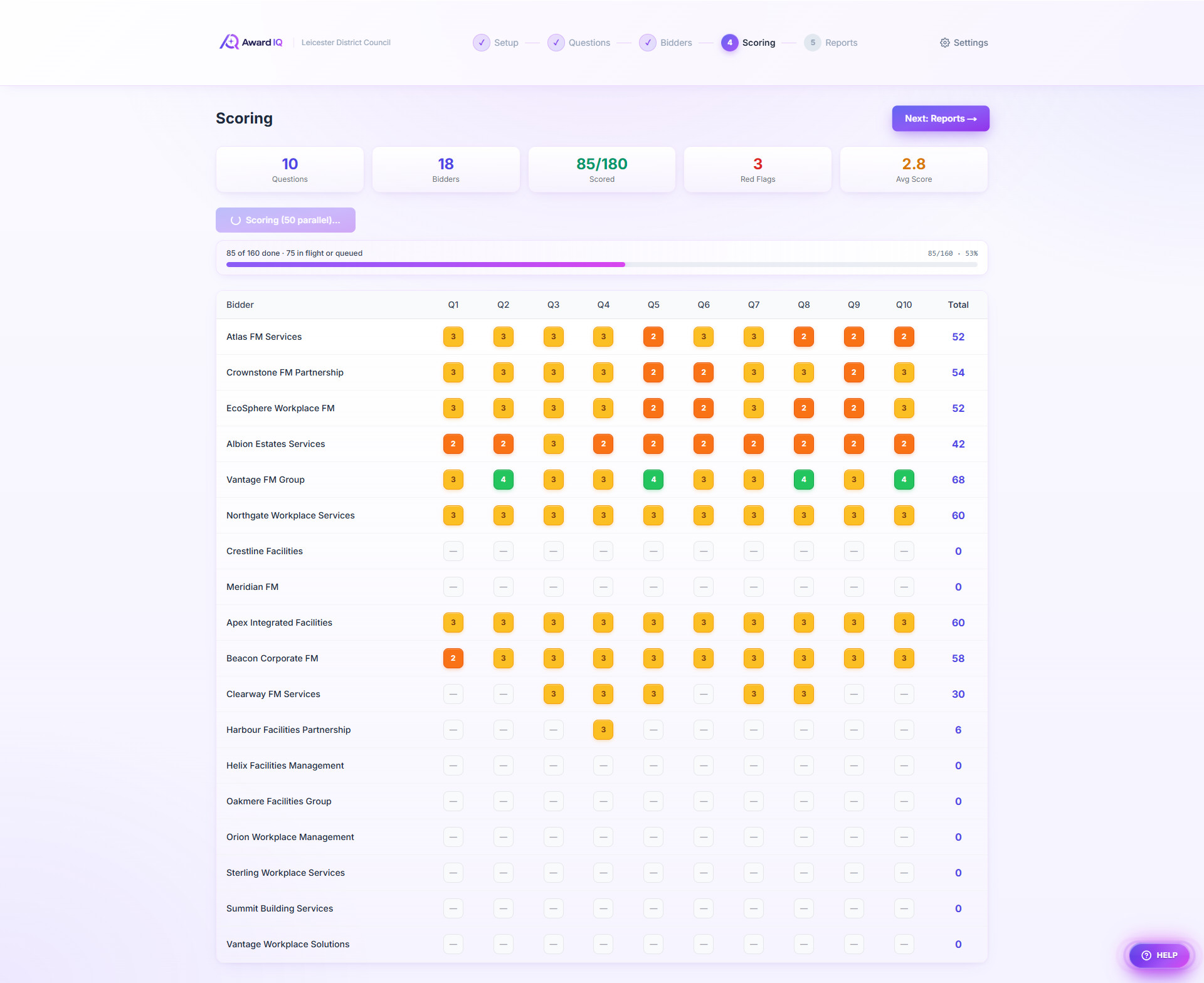

Descriptor Band Scoring

0–5 bands per response with strengths, weaknesses, three confidence ratings (evidence, sector, comparability), and weighted points. Bespoke per-question scoring criteria pulled from the tender's own scoring matrix.
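As a rough illustration of how a band can turn into weighted points, the sketch below assumes a simple linear conversion (band divided by the top band, multiplied by the question weighting). The function name and the linear rule are assumptions for illustration, not a description of Award IQ's internals.

```python
def weighted_points(band: int, weighting_pct: float, max_band: int = 5) -> float:
    """Convert a 0-5 descriptor band into weighted points for one question.

    Assumption: points scale linearly with the band, so the top band
    earns the full question weighting.
    """
    if not 0 <= band <= max_band:
        raise ValueError(f"band must be between 0 and {max_band}")
    if weighting_pct < 0:
        raise ValueError("weighting_pct must not be negative")
    return band * weighting_pct / max_band
```

Under that assumption, a "4 Good" on a question weighted at 20% gives `weighted_points(4, 20)`, which is 16.0 points.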

Evidence-Grounded Citations

Every score links to verbatim quoted passages from the bidder's own response — not paraphrasing. Each quote is verified against the source text; fabricated citations are flagged. The audit-grade moat.
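One plausible way to check a quote against the source text is a normalised substring match. The sketch below is an assumption about how such a check could work, not the product's actual method; `normalise` and `verify_quote` are hypothetical names.

```python
import re
import unicodedata

# Map curly quotation marks to straight ones so typography does not cause false flags.
_QUOTE_MAP = str.maketrans({
    "\u2018": "'", "\u2019": "'",
    "\u201c": '"', "\u201d": '"',
})


def normalise(text: str) -> str:
    """Fold Unicode forms, quote styles, whitespace and case before comparing."""
    text = unicodedata.normalize("NFKC", text).translate(_QUOTE_MAP)
    return re.sub(r"\s+", " ", text).strip().casefold()


def verify_quote(quote: str, source_text: str) -> bool:
    """Return True only if the quote appears verbatim in the bidder's response."""
    needle = normalise(quote)
    return bool(needle) and needle in normalise(source_text)
```

A quote that fails this check would be a candidate for flagging as unverified; line breaks and quote styles alone do not trigger a flag.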

Red Flag & Clarification Detection

Unsubstantiated claims, missing evidence, vague timelines, contradictions, sector mismatches — surfaced at point of scoring, with severity ratings and ready-drafted clarification questions.

Org Calibration That Learns

Every score override becomes a worked example for next time. The system learns how YOUR panel scores — what your team calls a "4 Good" vs a "5 Excellent" — not what a generic tool would.

Blind Scoring Mode

One toggle hides bidder names, so evaluators see only Supplier A/B/C/D. That reduces the scope for unconscious bias. Panel members are recorded individually, each with a named role.
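A minimal sketch of how blind aliases could be assigned is shown below. It assumes aliases are handed out in shuffled order so the label does not leak upload or alphabetical order, and it continues past Supplier Z (AA, AB, ...) for larger fields. Both function names are hypothetical.

```python
import random
from typing import Dict, List, Optional


def alias_label(index: int) -> str:
    """0 -> 'Supplier A', 25 -> 'Supplier Z', 26 -> 'Supplier AA', and so on."""
    letters = ""
    n = index + 1
    while n > 0:
        n, remainder = divmod(n - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return f"Supplier {letters}"


def blind_aliases(bidder_names: List[str],
                  rng: Optional[random.Random] = None) -> Dict[str, str]:
    """Map each real bidder name to an anonymous alias in shuffled order."""
    shuffled = list(bidder_names)
    (rng or random.Random()).shuffle(shuffled)
    return {name: alias_label(i) for i, name in enumerate(shuffled)}
```

The mapping would be kept away from evaluators and used to restore real names once blind scoring is complete.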

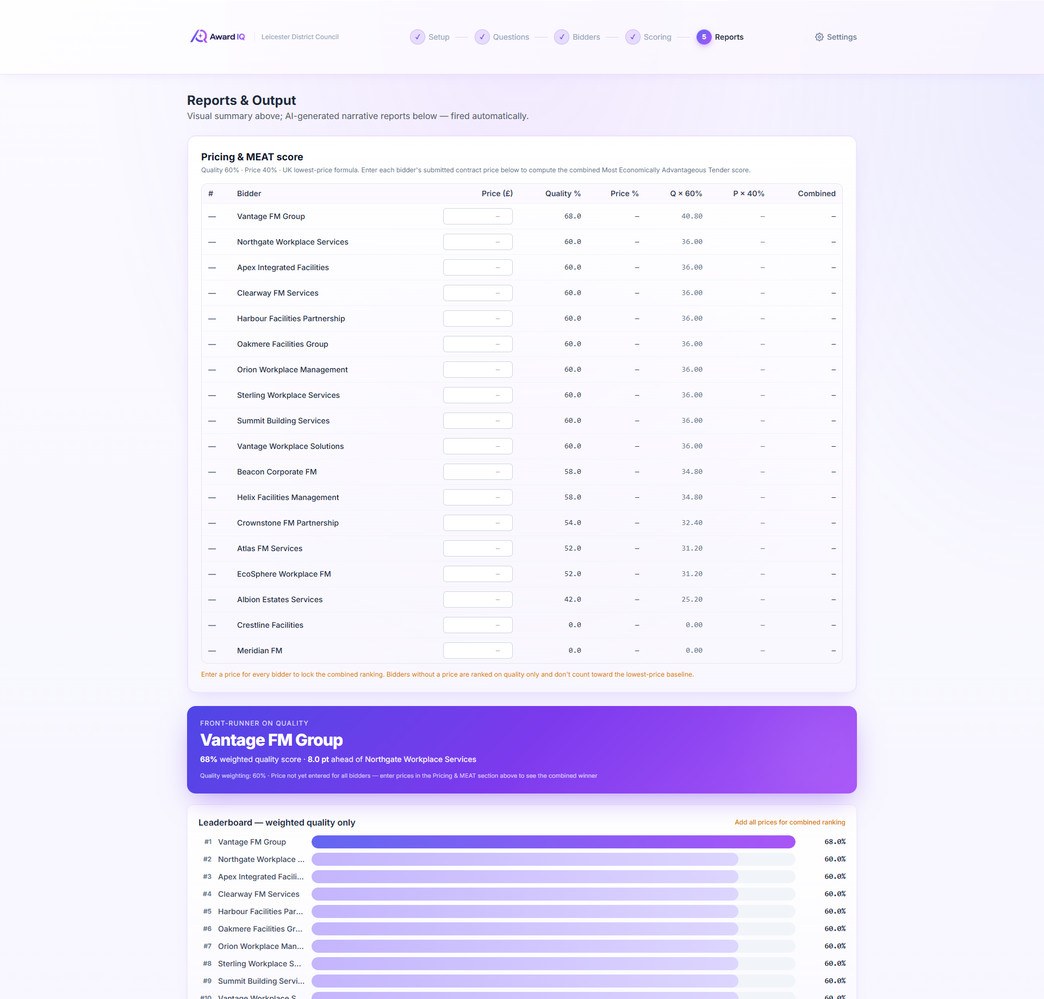

Most Advantageous Tender (MAT) Scoring

Designed for Procurement Act 2023 evaluation workflows. Enter each bidder's submitted price; combined score calculated automatically using the standard lowest-price formula plus your quality weighting. Award winner surfaced live.
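The combined score can be sketched as follows, assuming the common relative-price formula (lowest price divided by the bidder's price, multiplied by the price weighting) and a quality score expressed as a percentage of available quality marks. The `Bid` class, the field names and the 60/40 default are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Bid:
    name: str
    quality_pct: float  # quality score as a percentage of available quality marks
    price: float        # submitted price


def combined_scores(bids: List[Bid], quality_weight: float = 60.0) -> Dict[str, float]:
    """Combine quality and price into a single score out of 100 per bidder."""
    if not bids:
        raise ValueError("at least one bid is required")
    if any(b.price <= 0 for b in bids):
        raise ValueError("prices must be positive")
    price_weight = 100.0 - quality_weight
    lowest = min(b.price for b in bids)
    scores = {}
    for b in bids:
        quality_points = b.quality_pct / 100.0 * quality_weight
        price_points = lowest / b.price * price_weight  # cheapest bid earns full price marks
        scores[b.name] = round(quality_points + price_points, 2)
    return scores
```

With a 60/40 split, a bidder scoring 80% on quality at £100,000 gets 48 + 32 = 80 points, while a bidder scoring 70% at £80,000 (the lowest price) gets 42 + 40 = 82 points and would rank first.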

Visual Award Dashboard

Front-runner banner, ranked leaderboard, confidence donut, red-flag bars, score distribution, full bidder × question heatmap. Renders the moment scoring finishes.

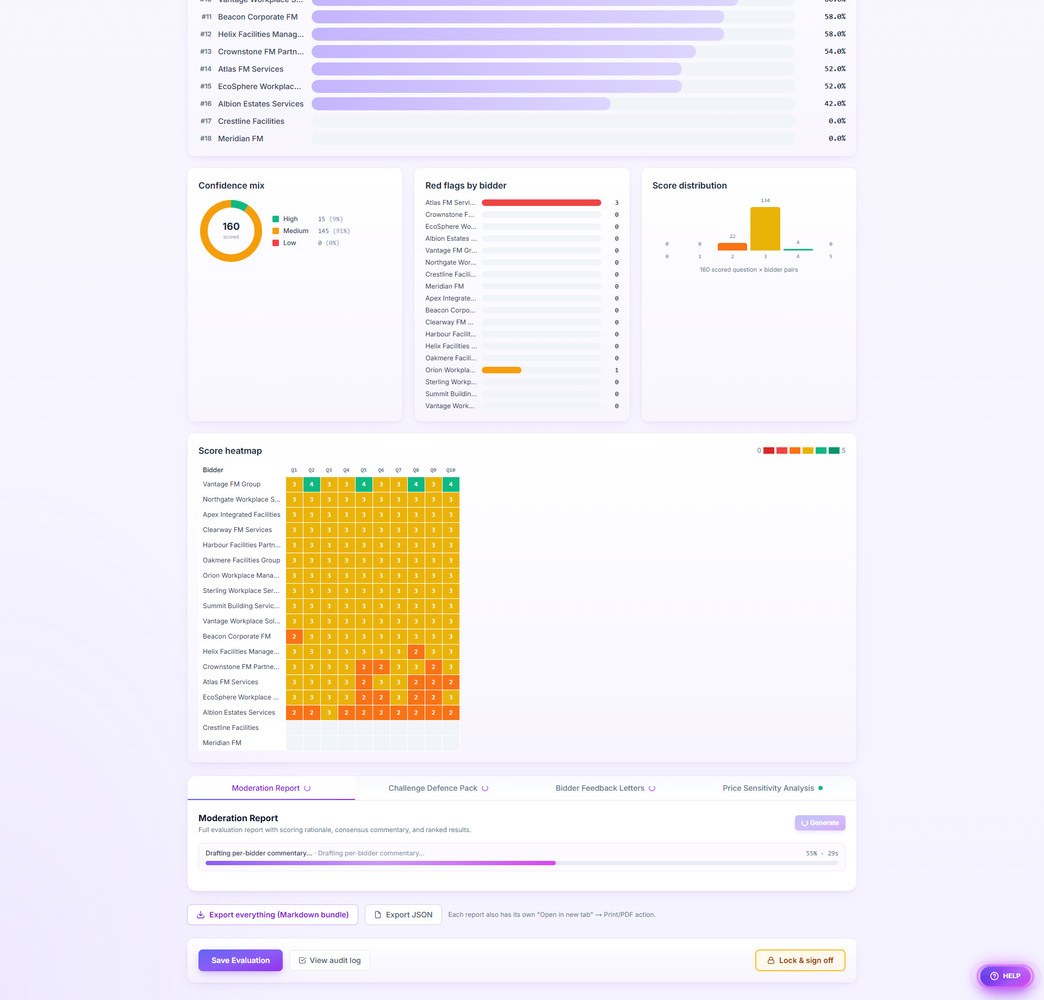

Four Reports, One Click

Moderation Report, Challenge Defence Pack, Bidder Feedback Letters, Price Sensitivity Analysis — all four auto-generate in parallel into a tabbed viewer. Each opens in its own print-ready page.

Audit Log & Lock-and-Sign

Every action recorded immutably with timestamp, evaluator name, and IP. Lock & Sign-Off freezes the evaluation with a typed signature attestation. Section 22-ready audit trail to support challenge response.
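One common way to make a log tamper-evident is to chain entries by hash, so any later edit breaks the chain. The sketch below illustrates that general technique only; whether Award IQ uses hash chaining is an assumption, and the field and function names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, str]], action: str, evaluator: str,
                 ip: str, timestamp: str) -> Dict[str, str]:
    """Append an event whose hash covers its content and the previous entry's hash."""
    body = {
        "action": action,
        "evaluator": evaluator,
        "ip": ip,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash; any edited or reordered entry makes this return False."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Freezing an evaluation could then amount to recording the final entry's hash alongside the typed signature.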

Export Everything

One click downloads a Markdown bundle with setup, panel, MEAT/MAT table, score matrix, every question with criteria, and all four reports. JSON export for full backup. Each report individually printable.

Evaluation Panel & Roles

Named panellists with roles (Lead, Evaluator, Moderator, Observer). Active evaluator stamped on every audit event. Foundation for full multi-evaluator consensus moderation.

See it work

From bulk upload to signed-off defence pack — five steps, captured from the live product.

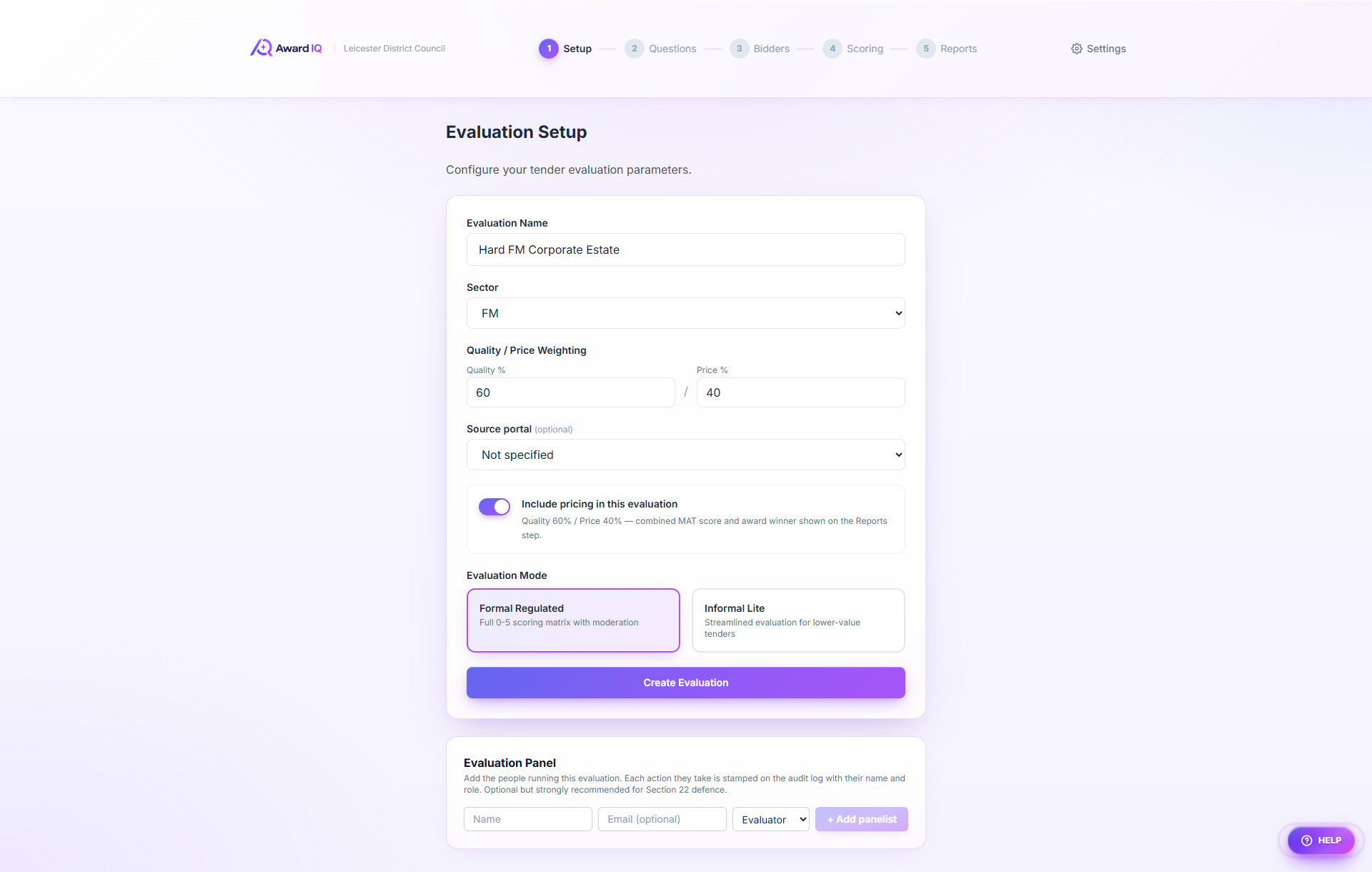

Set the rules of the evaluation

Name the evaluation, pick the sector, set the quality/price weighting (built around the Procurement Act 2023 MAT split), and choose Formal Regulated or Informal Lite mode. The Evaluation Panel section captures named evaluators and roles for the audit log.

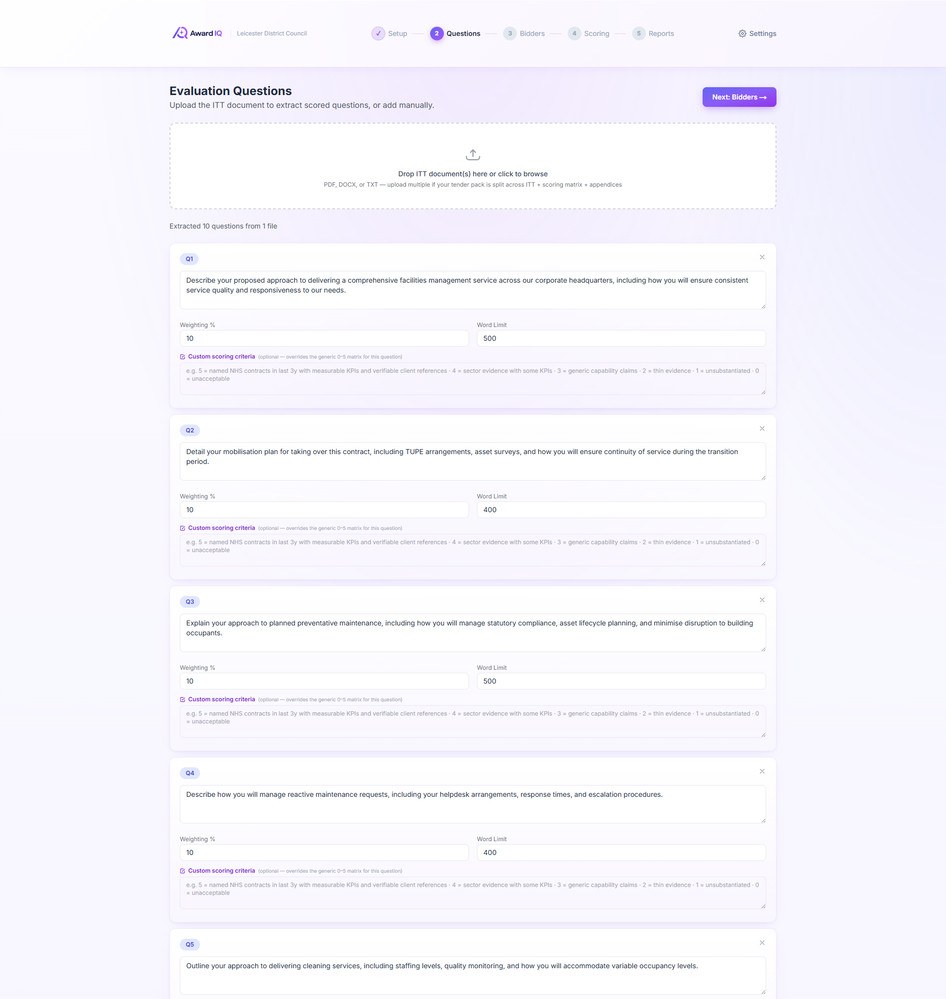

Drop the ITT — questions extracted automatically

PDF, DOCX, or TXT. The tender pack is parsed; scored questions surface as editable cards with weighting %, word limit, and per-question scoring criteria pulled directly from the tender’s scoring matrix.

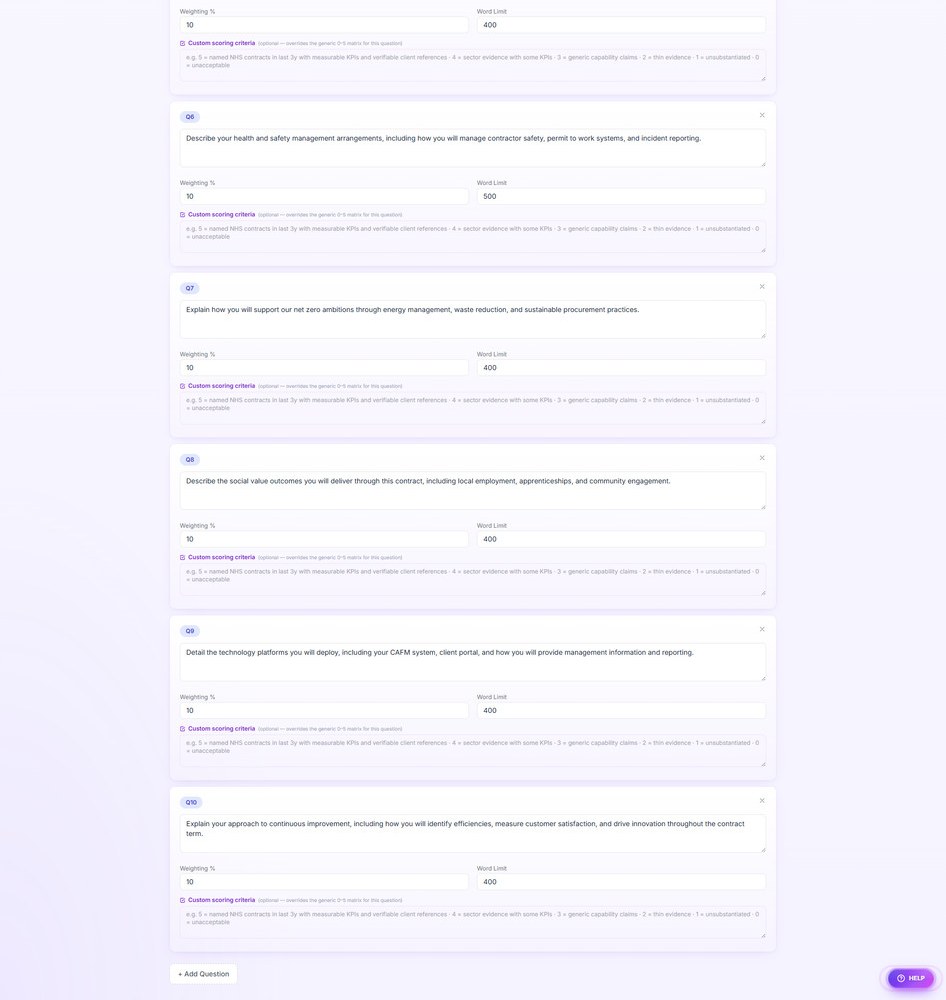

Bulk upload 50 bidder submissions in parallel

Drag a folder. Each file becomes a bidder card processing simultaneously (default 32 in flight). Company name auto-detected from the cover page; responses mapped to questions. Blind Mode hides names for unbiased scoring.
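Capping work at 32 items in flight is a standard bounded-concurrency pattern. The sketch below shows one way to do it with `asyncio.Semaphore`; `ingest_all` and the `ingest_one` callback are hypothetical names, and the real pipeline may be built quite differently.

```python
import asyncio
from typing import Any, Awaitable, Callable, List


async def ingest_all(paths: List[str],
                     ingest_one: Callable[[str], Awaitable[Any]],
                     max_in_flight: int = 32) -> List[Any]:
    """Process every file concurrently, never exceeding max_in_flight at once."""
    semaphore = asyncio.Semaphore(max_in_flight)

    async def bounded(path: str) -> Any:
        # Each task waits here until one of the max_in_flight slots is free.
        async with semaphore:
            return await ingest_one(path)

    # gather returns results in the same order as the input paths.
    return await asyncio.gather(*(bounded(p) for p in paths))
```

With 50 files and the default limit, the first 32 start immediately and the remaining 18 begin as earlier ones finish.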

Score every response — traffic-light matrix

Scoring starts automatically as submissions arrive: 32 simultaneous score calls, vivid 0–5 traffic-light tiles, live progress. Click any cell for the full justification, citations, confidence ratings, red flags, and one-click override.

Dashboard, MAT winner, four reports — one screen

Pricing & MAT live ranking, front-runner banner, leaderboard, confidence donut, red-flag bars, score distribution, full bidder × question heatmap, plus a tabbed viewer for all four AI-generated reports auto-firing in the background.

Four reports — auto-generated, audit-grade

All four fire in parallel the moment scoring completes. Each opens in its own print-ready page for PDF export.

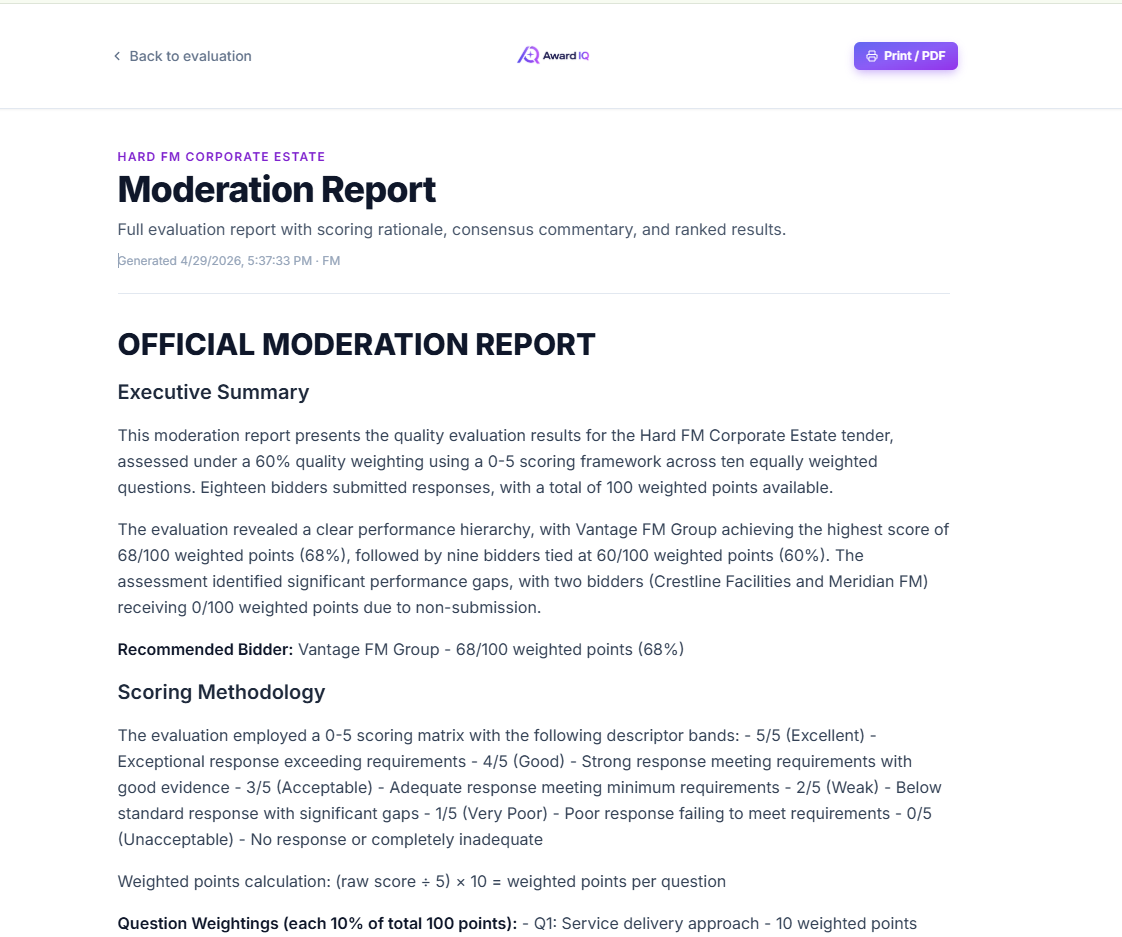

Moderation Report

Full evaluation report with scoring rationale, consensus commentary, and ranked results. A source-of-truth audit trail appendix is attached automatically.

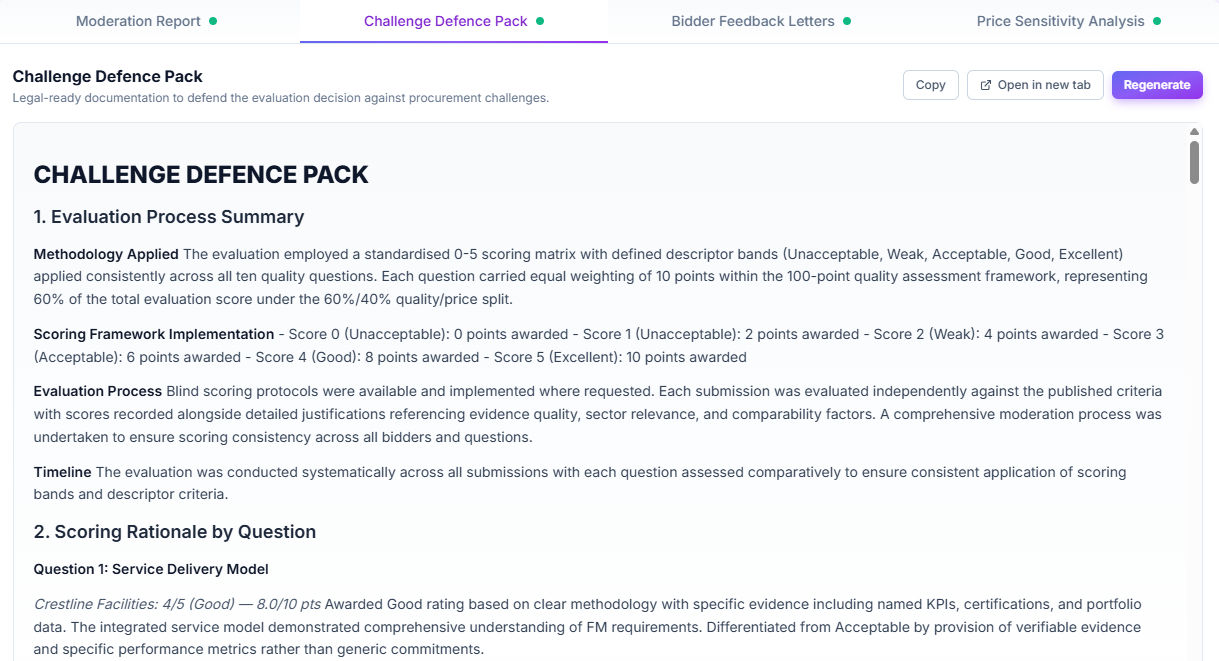

Challenge Defence Pack

Legal-ready documentation defending each evaluation decision, per-question methodology, and consistency analysis.

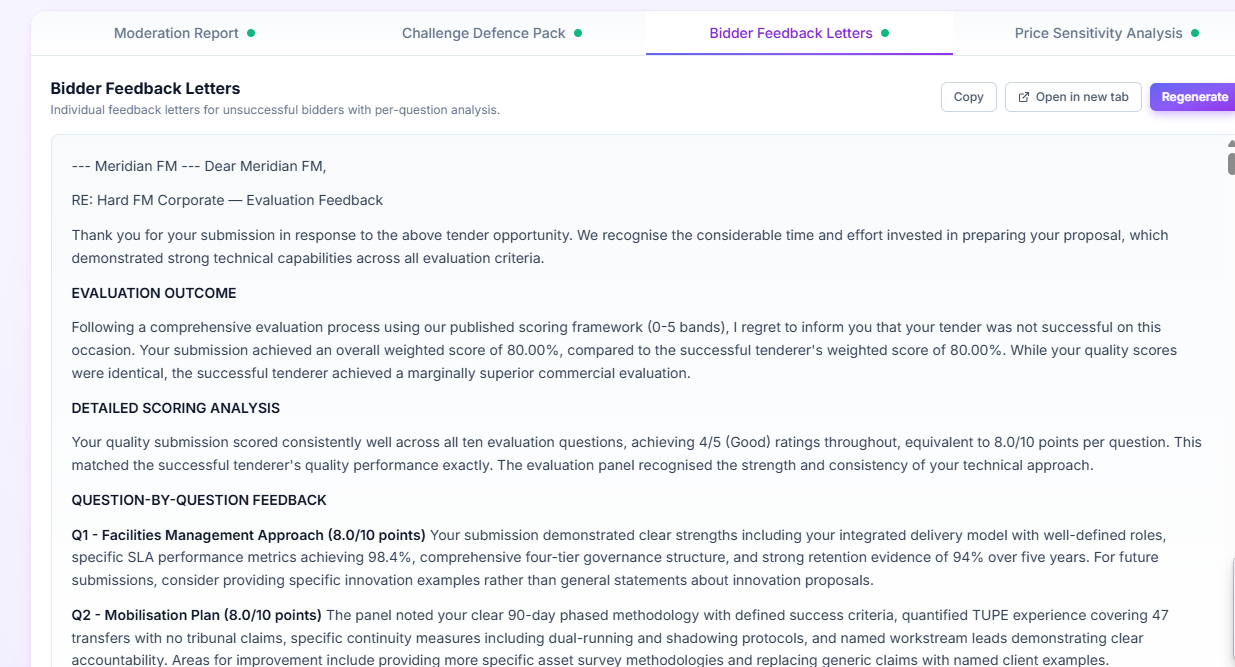

Bidder Feedback Letters

Per-bidder debrief letters for unsuccessful tenderers with score-band feedback and named-strength callouts. Procurement Act standstill placeholder built in.

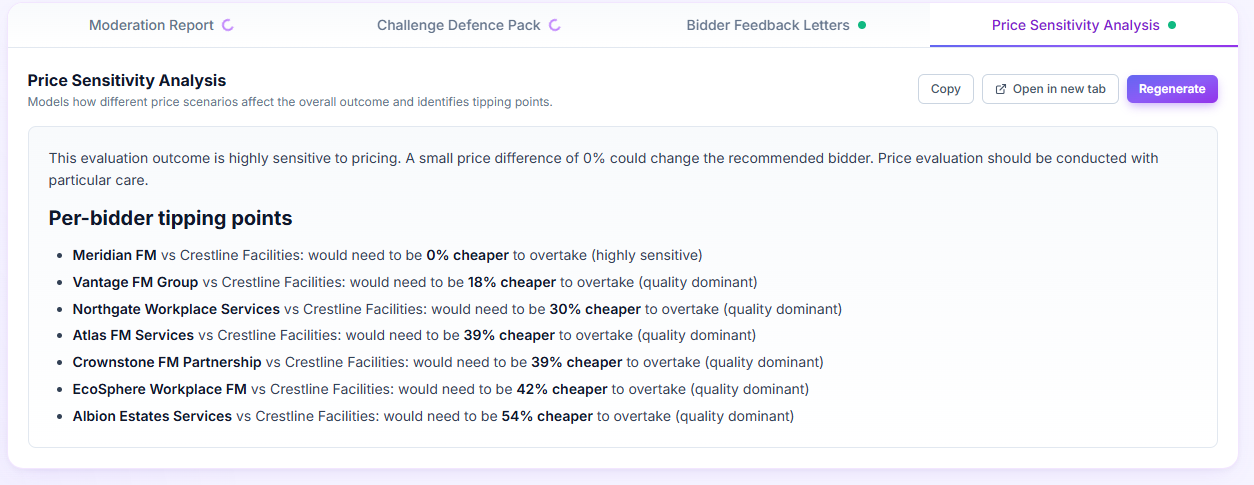

Price Sensitivity Analysis

How much cheaper would each runner-up need to be to overtake the leader? Tipping points calculated per bidder so you know how robust the award is.
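A tipping point can be worked out in closed form if price marks follow the relative-price formula (lowest price divided by bid price, multiplied by the price weighting). The sketch below rests on that assumption and only compares each rival with the current leader. `tipping_points` is a hypothetical name, and `bids` maps each bidder to a tuple of weighted quality points and price.

```python
from typing import Dict, Optional, Tuple


def tipping_points(bids: Dict[str, Tuple[float, float]],
                   price_weight: float) -> Dict[str, Optional[float]]:
    """For each runner-up, the price at which it would tie with the leader.

    Returns None where no price reduction could close the quality gap.
    """
    lowest = min(price for _, price in bids.values())
    totals = {name: q + price_weight * lowest / p for name, (q, p) in bids.items()}
    leader = max(totals, key=totals.get)
    leader_quality, leader_price = bids[leader]

    result: Dict[str, Optional[float]] = {}
    for name, (quality, _current_price) in bids.items():
        if name == leader:
            continue
        # Lowest price among everyone except this rival (always includes the leader).
        floor = min(p for other, (_, p) in bids.items() if other != name)
        # Price points the rival needs to tie while `floor` remains the lowest price.
        # This is always positive for a bidder that is not currently leading.
        needed = leader_quality + price_weight * floor / leader_price - quality
        if needed <= price_weight:
            result[name] = price_weight * floor / needed
            continue
        # Otherwise the rival must undercut the floor, which also lowers the
        # leader's own price points because the lowest price moves.
        headroom = quality + price_weight - leader_quality
        result[name] = leader_price * headroom / price_weight if headroom > 0 else None
    return result
```

For example, with 40 price marks, a leader on 48 quality points at £100,000 and a rival on 42 quality points at £110,000, the rival ties at £85,000: both then total 82 points, so any price below that would change the award.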